The Question Is Whether You Know About It

Many business owners are confident on this one. "We don't use AI here." They say it at industry events. They put it in RFP responses. They believe it.

But walk over to any desk in the office. Look at the browser tabs. ChatGPT. Gemini. Copilot. Free versions, personal accounts, zero oversight.

The staff aren't waiting for a policy. They're already using AI to write emails, summarise documents, draft proposals and crunch data - every single day.

The business just doesn't know about it.

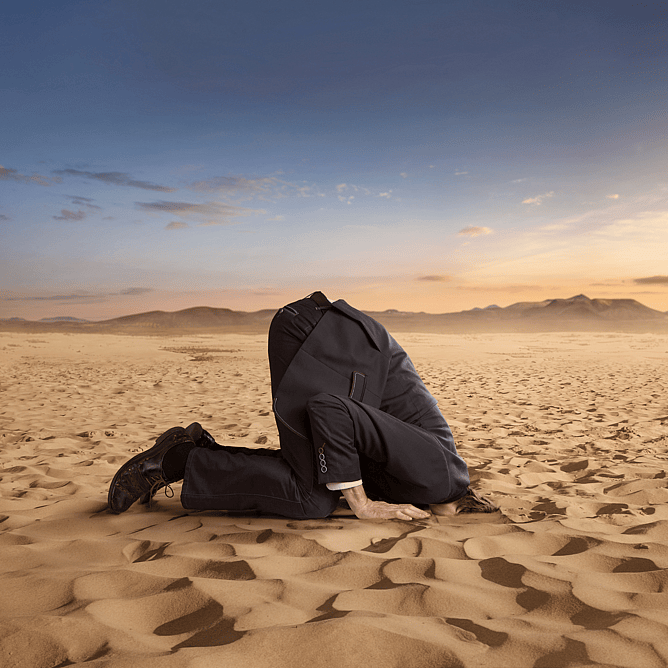

That's not a technology problem. It's a risk problem. And ignoring it doesn't make it go away. It makes it worse.

This post explains what's actually happening inside businesses that "don't use AI" and have no AI policy, what it's costing them, and what a proper enterprise AI approach looks like.

The 'We Don't Use AI' Myth...

Let's be direct. When a business leader says their company doesn't use AI, what they usually mean is: "We haven't approved any AI tools."

Those are two very different statements.

Free AI tools are everywhere. They're fast, capable and require nothing more than an email address to access. Staff use them to get work done faster - and they do it without malice. They're not trying to cause problems. They just want to finish the report before lunch.

But "free" has a cost the business doesn't see until something goes wrong.

What Staff Are Actually Doing Right Now...

Here's a scenario that plays out constantly in businesses across New Zealand.

A staff member needs to draft a client proposal. It's Friday afternoon. They paste the client's brief - including their name, budget, business details and scope - into ChatGPT. They get a draft in 30 seconds. They clean it up, send it, and go home.

That client information is now in a third-party system. On a free plan. With no data processing agreement in place. With no visibility from the business.

It doesn't have to be dramatic to be serious. It just has to happen once.

Common examples of what staff do with free AI tools:

Paste confidential client data to generate reports or summaries

Upload internal documents to ask for analysis or rewrites

Use personal accounts - so the business has no access logs, no audit trail and no control

Share commercially sensitive information that now sits outside any business security boundary

The Real Risks of Doing Nothing...

Choosing not to have an AI policy is still a choice. And it comes with consequences.

Intellectual property leakage

When staff paste your internal processes, client data, pricing models or product information into a free AI tool, that content leaves your environment. Free-tier terms of service for most AI platforms do not guarantee your data won't be used to train future models. Your IP may effectively become someone else's training data.

Security exposure

Free AI tools don't integrate with your identity or access management systems. There's no MFA requirement tied to your business. No visibility into who accessed what. If a staff member uses a personal account, you have no record it ever happened.

No audit trail

If a client dispute arises - or a regulatory body asks what information was shared and with whom - you have nothing. No logs. No policies. No evidence of due diligence.

Employment agreement gaps

Most employment agreements written before 2023 say nothing about AI. That means staff have no clear guidance on what's permitted, no understanding of their obligations, and no framework for consequences if something goes wrong. You can't enforce a rule that doesn't exist.

Reputational risk

If a client discovers their confidential information was processed through an unsanctioned third-party tool, that's a trust problem - regardless of what the law says about it.

Why an Enterprise AI Solution Changes the Picture...

An enterprise AI platform doesn't just give staff a better tool. It gives the business control.

Here's what changes when you move from 'no policy' to a managed AI environment:

Data stays in your environment.

Enterprise agreements typically include data processing terms and a commitment that your information won't be used to train external models. You know what's being processed and where.

Access is governed.

Staff log in with business credentials. You can see who's using the tool, when and for what. You can set permissions, restrict access and run usage reports.

Staff get a tool that actually works properly.

Enterprise AI can connect to your systems - your documents, your data, your workflows. It does more than a free chatbot, and it does it safely.

You set the rules.

An AI usage policy, embedded in your employment agreements and supported by a proper platform, gives staff clear boundaries. That protects them as much as it protects the business.

You reduce liability.

A business that has documented its AI approach, trained its staff and put governance in place is in a very different legal and ethical position from one that buried its head in the sand.

What Good AI Governance Looks Like...

You don't need a 40-page policy to start. You need three things:

A clear AI usage policy - what tools are approved, what data can and can't be processed, and what staff must do before using any AI tool on company business.

Updated employment agreements - a clause that covers AI use, confidentiality obligations in an AI context and the business's expectations.

An approved platform - one enterprise AI tool that staff can actually use, that meets your security requirements and that you manage.
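For anyone who wants to see how small the first item can be, here is a minimal sketch of a usage policy written as a simple check. Everything in it is an assumption made for illustration: the tool name, the data categories and the `AIUsagePolicy` class are hypothetical, not part of any real product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIUsagePolicy:
    """Hypothetical policy: names and categories are illustrative only."""

    # Tools the business has approved (assumed name, not a real product).
    approved_tools: frozenset = frozenset({"approved-enterprise-assistant"})
    # Data categories that must not be processed, even in an approved tool.
    blocked_data: frozenset = frozenset(
        {"client_confidential", "pricing", "personal_information"}
    )

    def check(self, tool: str, data_categories: set) -> tuple:
        """Return (allowed, reason) for a proposed use of an AI tool."""
        if tool not in self.approved_tools:
            return False, f"'{tool}' is not an approved tool"
        blocked = sorted(self.blocked_data & set(data_categories))
        if blocked:
            return False, "blocked data: " + ", ".join(blocked)
        return True, "permitted"


policy = AIUsagePolicy()
print(policy.check("free-chatbot", {"client_confidential"}))
print(policy.check("approved-enterprise-assistant", {"marketing_copy"}))
```

The point is not the code itself. A policy that fits in a few lines like this is one that staff can actually remember and follow.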

None of this requires a large IT department or a significant budget. It requires a decision.

The Cost of Waiting...

Every week you wait, staff keep using free tools. The data keeps leaving your environment. The audit trail stays empty.

And if something does go wrong - a data breach, a client complaint, a regulatory inquiry - "we didn't have a policy" is not a defence. It's an admission.

The businesses that handle AI well in the next few years won't be the ones with the most sophisticated tools. They'll be the ones that dealt with governance early, before they had a reason to.

Conclusion...

Your staff aren't waiting for permission to use AI. They're already using it. The question is whether they're using it safely - or whether they're taking risks the business doesn't know about and can't control.

A proper enterprise AI approach fixes that. It protects your clients, your IP, your staff and your reputation.

And it's not as complicated as most businesses assume.

We're happy to have a chat any time to help you through this transition.